Watch: Meloni’s AI warning highlights Europe’s fight against ‘nudification’ apps

Italy’s Prime Minister Sparks Debate on AI-Generated Nudity

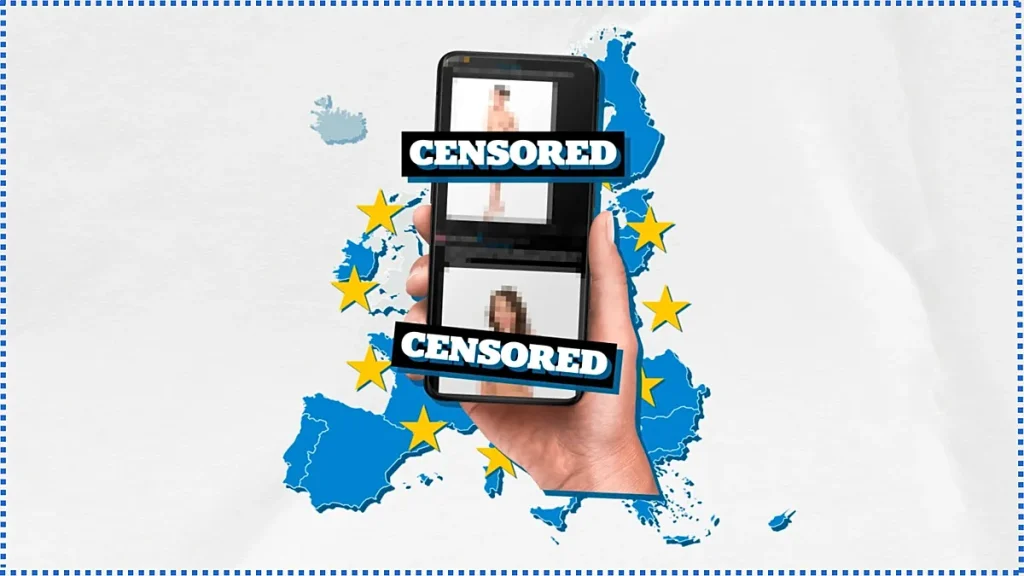

Giorgia Meloni, Italy’s prime minister, has become a focal point in Europe’s escalating battle against AI technologies that manipulate personal data. Earlier this week, she shared an AI-generated image of herself in underwear on social media, a deliberate act meant to underscore the vulnerability of even the most prominent public figures. The image, which had already gained traction online, served as a stark reminder of how easily digital tools can alter reality. The implied message: if this can happen to a head of government, it can happen to anyone. Her move sparked discussions about the broader implications of AI for privacy and consent, positioning Italy as a key player in the EU’s regulatory efforts.

The image was not just a personal statement but a calculated political maneuver. Meloni’s team framed it as a warning to the public and policymakers about the risks of unregulated AI. In an era where deepfakes and synthetic media can distort public perception, such actions highlight the urgency of legislative measures. By using her own likeness to demonstrate the potential of these tools, she emphasized the need for stronger safeguards against their misuse. The incident also drew attention to the rapid evolution of AI technology, which outpaces traditional legal frameworks.

EU Tightens Regulations on ‘Nudification’ Apps

On Thursday, the European Union made a significant stride in addressing the issue of AI-generated explicit content. A landmark agreement was reached to ban “nudification” apps—software that transforms ordinary photos of individuals into sexually suggestive images without their consent. These apps, often used in cyberbullying, harassment, and even blackmail, have become a growing concern for both individuals and institutions. The EU’s decision reflects a shift in approach, prioritizing proactive measures over reactive responses to digital threats.

The ban is part of a broader revision of the EU’s AI Act, a cornerstone of its strategy to govern artificial intelligence. This legislation, which has been under development for years, now faces its most critical update yet. The new provisions aim to balance innovation with accountability, ensuring that AI developers adhere to strict guidelines while still fostering technological advancement. By targeting nudification apps specifically, the EU has signaled its commitment to protecting personal data from exploitation, a move that aligns with its digital rights agenda.

While public figures like Meloni may have legal teams and platforms to defend their reputations, the average citizen often lacks such resources. This disparity underscores the importance of the EU’s initiative, as it seeks to level the playing field. The measure is designed to empower individuals by giving them legal recourse against non-consensual content. Once fully implemented, it will require companies to remove AI-generated images that violate privacy standards, effectively reducing the spread of damaging material.

Speeding Up the AI Legislation Process

Historically, EU legislation has moved at a slow pace, with agreements often taking years to finalize. However, the urgency surrounding AI regulation has prompted a faster approach. The recent decision to ban nudification apps was reached in a matter of weeks, a sign of how pressing the issue has become. This accelerated timeline is a departure from the usual bureaucratic delays, reflecting a consensus that the risks of AI-generated content demand immediate action.

The revised AI Act is expected to be fully enforceable by December 2026, marking a pivotal moment for digital privacy in the bloc. For those affected before then, existing laws like the General Data Protection Regulation (GDPR) offer some safeguards. Under GDPR, individuals can invoke the “right to erasure,” a legal tool that allows them to request the removal of personal data, including AI-generated images that misrepresent them. This provision is particularly crucial for victims of deepfakes, who can now take steps to reclaim their digital identities.

Yet, the effectiveness of these protections hinges on enforcement. While GDPR provides a framework, its implementation can be complex, especially for smaller platforms or individuals unfamiliar with the process. The EU’s new rules will simplify this by creating clear guidelines for companies, ensuring they are held accountable for content they generate or disseminate. This is a major step forward, as it not only addresses the immediate threat of nudification apps but also sets a precedent for future AI regulations.

Real-World Impact of AI-Generated Fakes

The threat posed by AI-generated content is not hypothetical. At Euronews, we have witnessed firsthand the havoc these tools can wreak on public trust and individual dignity. Our journalists and reporters have been targeted by deepfake campaigns, in which voices are altered, images are stolen, and misinformation is spread with alarming speed. One notable example involved a coordinated effort by Russia Today to disseminate synthetic media, blurring the lines between fact and fabrication.

“Our journalists and reporting have repeatedly been targeted by AI-generated fakes,” said a Euronews representative. “Voices are manipulated, images are stolen, and the impact is felt by both individuals and institutions.”

Such incidents are not isolated; they are part of a larger trend where AI tools are weaponized for disinformation. The EU’s ban on nudification apps is a response to this reality, aiming to prevent the proliferation of content that can harm personal and professional reputations. The legislation also highlights the need for a multi-faceted approach, combining technical solutions with legal frameworks to combat the spread of synthetic media.

The move to ban these apps is significant because it targets the root of the problem: the ability of AI to create content that appears authentic but is entirely fabricated. By imposing restrictions on the development and distribution of such tools, the EU hopes to reduce the number of victims and restore confidence in digital platforms. This is particularly important in a time when AI is increasingly used to manipulate public opinion, especially in political and social contexts.

As the EU continues to refine its AI policies, the focus remains on ensuring that technology serves as a tool for empowerment rather than exploitation. The ban on nudification apps is a clear indication of this priority, demonstrating the bloc’s willingness to adapt swiftly in the face of emerging challenges. While the road to full implementation may be long, the groundwork laid by this decision will shape the future of digital privacy in Europe. For now, the message is simple: individuals should not wait to act when they become victims of AI-generated fakes. Reporting incidents promptly is essential to protect one’s digital footprint and maintain the integrity of public discourse.

The European Union’s approach to AI regulation is now a model for other regions, proving that decisive action is possible even in a complex political landscape. As the world grapples with the implications of AI, the EU’s efforts to ban nudification apps stand as a testament to the importance of safeguarding personal data. This is not just about preventing embarrassment or harassment; it’s about preserving the very foundation of trust in the digital age.